One of the main reasons storage systems became intelligent is a technique called RAID. A group of disk drives, collectively referred to as a disk array, is very expensive, has a single point of failure, and delivers limited IOPS. Most large data centers experience multiple disk drive failures each day as capacity increases and performance decreases. To overcome these limitations, a technique called RAID was introduced in the late 1980s to keep data centers running smoothly and without interruption. A properly configured RAID set protects data from failed disk drives and improves I/O performance by parallelizing I/O across multiple drives.

What is RAID?

RAID stands for Redundant Array of Inexpensive (or Independent) Disks. It is a technique in which multiple disk drives are combined into a logical unit called a RAID set, and data is written in blocks across the disks in that set. RAID protects against data loss when a drive fails through the use of redundant drives and parity. It also improves storage system performance, because read and write operations are served simultaneously from multiple disk drives.

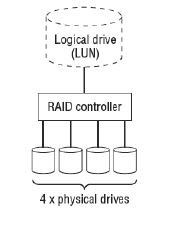

RAID is typically implemented by using a specialised hardware controller present either on the compute system or on the storage system. The key functions of a RAID controller are management and control of drive aggregations, translation of I/O requests between logical and physical drives, and data regeneration in the event of drive failures.

A RAID array is an enclosure that contains a number of disk drives and supporting hardware to implement RAID. A subset of disks within a RAID array can be grouped to form logical associations called logical arrays, also known as a RAID set or a RAID group.

There are two methods of RAID implementation, hardware and software. Both have their advantages and disadvantages.

Software RAID

Software RAID uses compute system-based software to provide RAID functions and is implemented at the operating-system level. Software RAID implementations offer cost and simplicity benefits when compared with hardware RAID. However, they have the following limitations:

- Performance: Software RAID affects the overall system performance. This is due to additional CPU cycles required to perform RAID calculations.

- Supported features: Software RAID does not support all RAID levels.

- Operating system compatibility: Software RAID is tied to the operating system; hence, upgrades to software RAID or to the operating system should be validated for compatibility. This leads to inflexibility in the data-processing environment.

Hardware RAID

In hardware RAID implementations, a specialised hardware controller is implemented either on the server or on the storage system. Controller card RAID is a server-based hardware RAID implementation in which a specialised RAID controller is installed in the server, and disk drives are connected to it. Manufacturers also integrate RAID controllers on motherboards. A server-based RAID controller is not an efficient solution in a data center environment with a large number of servers.

The external RAID controller is a storage system-based hardware RAID. It acts as an interface between the servers and the disks. It presents storage volumes to the servers, and the servers manage these volumes as physical drives. The key functions of the RAID controllers are as follows:

- Management and control of disk aggregations

- Translation of I/O requests between logical disks and physical disks

- Data regeneration in the event of disk failures

Hardware RAID can offer increased performance, faster rebuilds, and hot-spares, and can protect OS boot volumes. However, software RAID tends to be more flexible and cheaper.

RAID Techniques

The three different RAID techniques that form the basis for defining various RAID levels are striping, mirroring, and parity. These techniques determine the data availability and performance of a RAID set as well as the relative cost of deploying a RAID level.

Striping: Striping is a technique of spreading data across two or more drives so that the drives can be used in parallel. All the read-write heads work simultaneously, allowing more data to be processed in a shorter time and increasing performance compared to reading and writing from a single disk.
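
As a rough illustration of how striping spreads data, the sketch below maps a logical block number to a disk and an offset on that disk. It assumes the simplest possible layout, a stripe unit of one block placed round-robin across the drives; the function name `locate_block` is hypothetical and real controllers use larger, configurable stripe sizes.

```python
from typing import Tuple


def locate_block(logical_block: int, num_disks: int) -> Tuple[int, int]:
    """Map a logical block number to (disk index, block offset on that disk).

    Assumes a stripe unit of one block, laid out round-robin.
    """
    if num_disks < 2:
        raise ValueError("striping needs at least two disks")
    disk = logical_block % num_disks      # which drive holds the block
    offset = logical_block // num_disks   # position of the block on that drive
    return disk, offset


# Eight consecutive logical blocks spread over four disks.
layout = [locate_block(block, 4) for block in range(8)]
print(layout)
```

Consecutive blocks land on different drives, so a large sequential read or write can keep all four drives busy at once.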

Mirroring: Mirroring is a technique whereby the same data is stored on two different disk drives, yielding two copies of the data. If one disk drive fails, the data remains intact on the surviving disk drive, and the controller continues to service the compute system’s data requests from the surviving disk of the mirrored pair. When the failed disk is replaced with a new disk, the controller copies the data from the surviving disk of the mirrored pair. This activity is transparent to the server.

In addition to providing complete data redundancy, mirroring enables fast recovery from disk failure. However, disk mirroring provides only data protection and is not a substitute for data backup. Mirroring constantly captures changes in the data, whereas a backup captures point-in-time images of the data. Mirroring involves duplication of data; that is, the amount of storage capacity needed is twice the amount of data being stored. Therefore, mirroring is considered expensive and is preferred for mission-critical applications that cannot afford the risk of any data loss.

Mirroring improves read performance because read requests can be serviced by both disks. However, write performance is slightly lower than that of a single disk because each write request manifests as two writes on the disk drives. Mirroring does not deliver the same levels of write performance as a striped RAID set.
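
The behaviour described above can be sketched with a toy model of a mirrored pair. Everything here is an assumption made for illustration: the class name `MirroredPair`, the in-memory lists standing in for disks, and the simple alternating read policy are hypothetical and do not reflect any particular controller.

```python
class MirroredPair:
    """Toy model of a two-disk mirror; each disk is a list of blocks."""

    def __init__(self, num_blocks):
        self.disks = [[None] * num_blocks for _ in range(2)]
        self.failed = [False, False]
        self._next = 0  # disk to try first on the next read

    def write(self, block, data):
        """Write to every healthy disk; return how many disk writes occurred."""
        writes = 0
        for i in range(2):
            if not self.failed[i]:
                self.disks[i][block] = data
                writes += 1
        return writes

    def read(self, block):
        """Alternate reads between the disks, skipping a failed one."""
        for _ in range(2):
            i, self._next = self._next, 1 - self._next
            if not self.failed[i]:
                return self.disks[i][block]
        raise IOError("both disks in the mirrored pair have failed")

    def fail(self, i):
        """Mark disk i as failed."""
        self.failed[i] = True

    def replace(self, i):
        """Rebuild a replaced disk by copying from the surviving disk."""
        if self.failed[1 - i]:
            raise IOError("no surviving disk to copy from")
        self.disks[i] = list(self.disks[1 - i])
        self.failed[i] = False


pair = MirroredPair(num_blocks=4)
disk_writes = pair.write(0, b"payload")  # one logical write, two disk writes
pair.fail(0)                             # simulate a drive failure
survivor_copy = pair.read(0)             # still served by the surviving disk
pair.replace(0)                          # rebuild onto the new disk
```

The model shows both sides of the trade-off: reads can be shared between the disks, while every logical write costs two physical writes.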

Parity: Parity is a method to protect striped data from disk drive failure without the cost of mirroring. An additional disk drive is added to hold parity, a mathematical construct that allows re-creation of the missing data. Parity is a redundancy technique that ensures protection of data without maintaining a full set of duplicate data. Calculation of parity is a function of the RAID controller. Parity information can be stored on separate, dedicated disk drives, or distributed across all the drives in a RAID set.

If one of the data disks fails, the missing value can be recalculated from the parity value and the data on the surviving disks. Conceptually, this is like subtracting the sum of the remaining elements from the parity value; in practice, the parity calculation is a bitwise XOR operation.
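
To make the XOR idea concrete, here is a minimal sketch that computes parity over three data blocks and then rebuilds one of them after a simulated failure. The helper name `xor_blocks` and the sample byte values are assumptions chosen for illustration.

```python
from functools import reduce


def xor_blocks(blocks):
    """Return the bitwise XOR of equally sized byte strings."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Three data blocks, one per data disk (sample values only).
data = [b"\x0f\xa0", b"\x33\x0c", b"\x55\xff"]
parity = xor_blocks(data)

# Simulate losing the second disk: XOR the survivors with the parity block.
recovered = xor_blocks([data[0], data[2], parity])
assert recovered == data[1]
```

Because XOR is its own inverse, the same function serves both to generate parity and to regenerate a missing block.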

Compared to mirroring, parity implementation considerably reduces the cost associated with data protection. Consider an example of a parity RAID configuration with four disks where three disks hold data and the fourth holds the parity information. In this example, parity requires only 33 percent extra disk space, whereas mirroring requires 100 percent extra disk space. However, there are some disadvantages of using parity. Parity information is generated from the data on the data disks. Therefore, parity is recalculated every time there is a change in data. This recalculation is time-consuming and affects the performance of the RAID array.
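
The arithmetic behind the 33 percent figure, and the cost of recalculating parity on each change, can be sketched as follows. Both function names are hypothetical, and the parity update shown (XOR of the old parity, the old data, and the new data) is one common way to avoid rereading every data disk, not necessarily what a given controller does.

```python
def parity_overhead_percent(data_disks, parity_disks=1):
    """Extra disk space for parity, as a percentage of the data capacity."""
    return 100 * parity_disks / data_disks


def update_parity(old_parity, old_data, new_data):
    """Recompute parity after one block changes.

    Needs the old data and old parity to be read first, which is why a
    small write costs extra I/O on a parity RAID set.
    """
    return bytes(p ^ o ^ n for p, o, n in zip(old_parity, old_data, new_data))


# Three data disks plus one parity disk, as in the example above.
overhead = parity_overhead_percent(data_disks=3)  # about 33.3

# Change the block on the second disk and update the parity to match.
data = [b"\x01\x02", b"\x10\x20", b"\xaa\xbb"]
parity = bytes(a ^ b ^ c for a, b, c in zip(*data))
new_block = b"\xff\x00"
new_parity = update_parity(parity, data[1], new_block)
```

The updated parity equals what a full recalculation over all three data blocks would produce, but each small write still involves reading the old data and old parity and then writing both back.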

As a best practice, it is highly recommended to create the RAID set from drives of the same type, speed, and capacity to ensure maximum usable capacity, reliability, and consistency in performance. For example, if drives of different capacities are mixed in a RAID set, the capacity of the smallest drive is used from each drive in the set to make up the RAID set’s overall capacity. The remaining capacity of the larger drives remains unused. Likewise, mixing higher speed drives with lower speed drives lowers the overall performance of the RAID set.
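
The effect of mixing capacities can be worked out with a short calculation. The function name `raid_set_capacity` and the drive sizes are assumptions for illustration, and the result is the raw capacity of the set before any mirroring or parity overhead is subtracted.

```python
def raid_set_capacity(drive_sizes_gb):
    """Return (capacity used by the RAID set, capacity left unused) in GB.

    Every drive contributes only as much as the smallest drive in the set.
    """
    smallest = min(drive_sizes_gb)
    used = smallest * len(drive_sizes_gb)
    unused = sum(drive_sizes_gb) - used
    return used, unused


# One 500 GB drive mixed with two 1000 GB drives.
used_gb, unused_gb = raid_set_capacity([500, 1000, 1000])
print(used_gb, unused_gb)  # 1500 1000
```

In this example, 1000 GB of the purchased capacity goes unused, which is the waste that matching drive sizes avoids.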
