An iSCSI SAN consists of initiators and targets connected by an IP network. The server acts as the initiator (the iSCSI host), and the iSCSI storage device acts as the target (the iSCSI array). Both initiators and targets need a physical interface to the network to transfer data.

These interfaces are usually PCI devices, either integrated into the server motherboard or installed as PCI expansion cards. They connect to the intermediate TCP/IP network over copper or fiber-optic cables. Several types of iSCSI initiator can be used to deploy an iSCSI SAN, and they differ in performance and cost.

Types of iSCSI initiators

The four common types of iSCSI initiator are a standard NIC with a software iSCSI adapter, a TCP offload engine (TOE) NIC with a software iSCSI adapter, an iSCSI HBA, and a Converged Network Adapter (CNA).

Standard NIC with software iSCSI adapter: The software iSCSI adapter is software resident in the operating system (OS) or hypervisor kernel that uses an existing NIC of the compute system to emulate an iSCSI initiator. It is the least expensive option and the easiest to implement, because most compute systems come with at least one, and often two, embedded NICs, so only a software initiator is needed for iSCSI functionality. Because a standard NIC provides only basic networking functions, both the TCP/IP processing and the encapsulation of SCSI data into IP packets are carried out by the CPU of the server or host. This places additional overhead on the CPU, and under heavy I/O load the CPU of the server might become a bottleneck.
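To make "encapsulation" more concrete, the following Python sketch shows the kind of work the host CPU does with a software initiator before the data is handed to TCP: wrapping a SCSI command in an iSCSI header. The field layout follows the SCSI Command header as I understand it from the iSCSI specification (RFC 7143). The function names, the 512-byte block size, and the raw LUN value are illustrative assumptions, and a real initiator does far more (login, sequencing, digests, error recovery).

```python
import struct

ISCSI_OP_SCSI_COMMAND = 0x01  # opcode of a SCSI Command PDU
BLOCK_SIZE = 512              # assumed logical block size, for illustration only


def build_read10_cdb(lba: int, blocks: int) -> bytes:
    """Build a 10-byte SCSI READ(10) command descriptor block (CDB)."""
    # opcode 0x28, flags, 4-byte LBA, group number, 2-byte length, control
    return struct.pack(">BBIBHB", 0x28, 0, lba, 0, blocks, 0)


def build_scsi_command_header(cdb: bytes, lun_field: int, task_tag: int,
                              cmd_sn: int, exp_stat_sn: int,
                              expected_len: int) -> bytes:
    """Wrap a read-type CDB in a 48-byte iSCSI header (no data segment)."""
    if len(cdb) > 16:
        raise ValueError("longer CDBs need an additional header segment")
    flags = 0x80 | 0x40  # Final bit plus Read bit
    return struct.pack(
        ">BBHB3sQIIII16s",
        ISCSI_OP_SCSI_COMMAND,
        flags,
        0,                       # reserved
        0,                       # TotalAHSLength: no additional header segments
        b"\x00\x00\x00",         # DataSegmentLength: a read sends no data
        lun_field,               # 8-byte LUN field, passed through as-is
        task_tag,                # Initiator Task Tag
        expected_len,            # Expected Data Transfer Length in bytes
        cmd_sn,
        exp_stat_sn,
        cdb.ljust(16, b"\x00"),  # CDB padded to 16 bytes
    )


cdb = build_read10_cdb(lba=2048, blocks=8)
pdu = build_scsi_command_header(cdb, lun_field=0, task_tag=1, cmd_sn=1,
                                exp_stat_sn=1, expected_len=8 * BLOCK_SIZE)
print(len(pdu))  # 48
```

With a software iSCSI adapter, the host CPU performs this step for every command and then also runs the TCP/IP stack that carries the result to the target.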

TOE NIC with software iSCSI adapter: A TOE NIC offloads the TCP/IP processing from the CPU of a host and leaves only the iSCSI functionality to the CPU. The host passes the iSCSI information to the TOE NIC and then the TOE NIC sends the information to the destination using TCP/IP. Although this solution improves performance, the iSCSI functionality is still handled by a software adapter that requires CPU cycles of the compute system.

iSCSI HBA: An iSCSI HBA is a hardware adapter with built-in iSCSI functionality. It can provide performance benefits over software iSCSI adapters by offloading the entire iSCSI and TCP/IP processing from the CPU of the compute system to the processor on the host bus adapter (HBA). iSCSI HBAs can also include an option ROM, which allows diskless servers to boot from the iSCSI SAN.

Converged Network Adapter: A CNA offers everything an iSCSI HBA offers, such as reduced load on the host CPU and boot options, with the added versatility of being dynamically configurable for protocols other than iSCSI, such as FCoE. CNAs are discussed further in the FCoE chapter.

A standard NIC is the most commonly used option and is simple to set up in a test environment, but you should not depend on it for real-time, critical applications. Which option is best for your environment depends on requirements such as cost and performance. All of these options appear to the OS as SCSI adapters, so the OS cannot distinguish volumes presented through the iSCSI SAN from volumes on a local disk inside the server. This makes it simple to use an iSCSI SAN with existing systems.
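The difference between the four initiator types comes down to which processing stays on the host CPU. The short Python sketch below restates that comparison as data. The class and function names are hypothetical, chosen only for this illustration, and the table encodes nothing beyond what is described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InitiatorType:
    name: str
    tcpip_on_adapter: bool       # TCP/IP processing offloaded to the adapter
    iscsi_on_adapter: bool       # iSCSI processing offloaded to the adapter
    other_protocols: tuple = ()  # protocols besides iSCSI the adapter can run


INITIATORS = [
    InitiatorType("Standard NIC + software iSCSI adapter", False, False),
    InitiatorType("TOE NIC + software iSCSI adapter", True, False),
    InitiatorType("iSCSI HBA", True, True),
    InitiatorType("Converged Network Adapter", True, True, ("FCoE",)),
]


def host_cpu_work(initiator: InitiatorType) -> list:
    """Return the protocol layers the host CPU still has to process."""
    work = []
    if not initiator.tcpip_on_adapter:
        work.append("TCP/IP")
    if not initiator.iscsi_on_adapter:
        work.append("iSCSI")
    return work


for initiator in INITIATORS:
    layers = ", ".join(host_cpu_work(initiator)) or "neither layer"
    print(f"{initiator.name}: host CPU handles {layers}")
```

The fewer layers left on the host CPU, the less likely the CPU is to become the bottleneck under heavy I/O, at the price of a more expensive adapter.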

iSCSI connectivity types

iSCSI implementations support two types of connectivity: native and bridged.

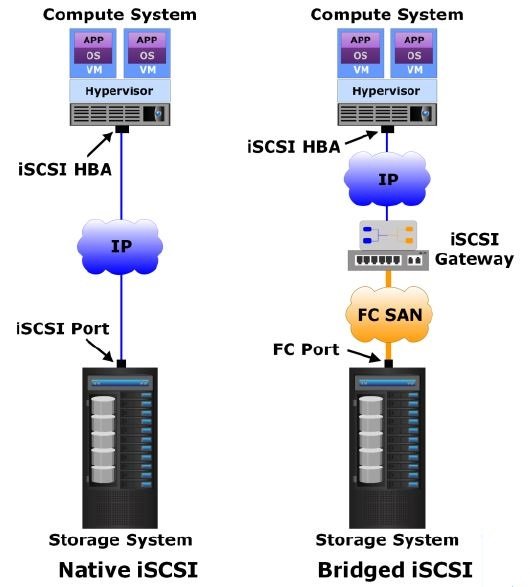

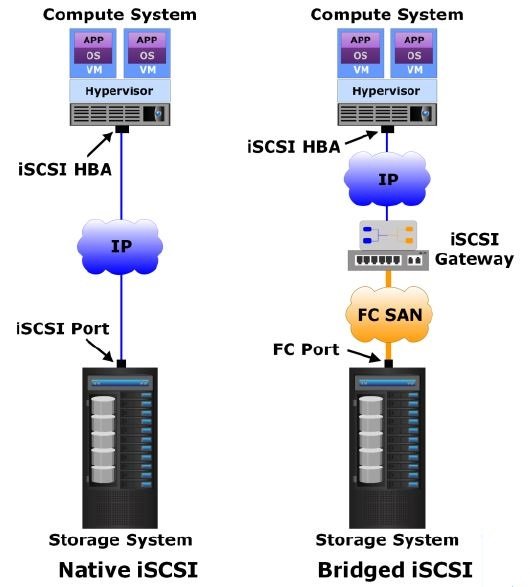

Native iSCSI: In this type of connectivity, the host with iSCSI initiators is either directly attached to the iSCSI targets (iSCSI-capable storage systems) or connected to them through an IP-based network. FC components are not required for native iSCSI connectivity. The accompanying figure shows a native iSCSI implementation that includes a storage system with an iSCSI port. The storage system is connected to an IP network. After an iSCSI initiator is logged on to the network, it can access the available LUNs on the storage system.

Bridged iSCSI: This type of connectivity allows the initiators to exist in an IP environment while the storage systems remain in an FC SAN environment. It enables FC and IP to coexist by providing iSCSI-to-FC bridging functionality. The accompanying figure illustrates a bridged iSCSI implementation. It shows connectivity between a compute system with an iSCSI initiator and a storage system with an FC port.

Because the storage system has no iSCSI port, a gateway or a multi-protocol router is used. The gateway contains both FC and Ethernet ports, and it handles the communication between the compute system with iSCSI ports and the storage system with only FC ports. It converts IP packets to FC frames and vice versa, thereby bridging the IP and FC environments. The iSCSI initiator is configured with the gateway’s IP address as its target destination. On the other side, the gateway is configured as an FC initiator to the storage system.
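The bridging role of the gateway can be sketched as a toy model in Python. This is not how a real gateway is implemented: the class names, the dictionary "packets" and "frames", and the example IP address are all assumptions made for illustration. The point is only that the SCSI payload passes through unchanged while the transport around it is swapped.

```python
from dataclasses import dataclass, field


@dataclass
class FcStorageSystem:
    """Toy storage system that only has FC ports."""
    luns: dict = field(default_factory=dict)  # LUN number -> stored data

    def receive_fc_frame(self, frame: dict) -> dict:
        data = self.luns[frame["scsi"]["lun"]]
        return {"transport": "FC", "scsi": {"status": "GOOD", "data": data}}


@dataclass
class Gateway:
    """Toy gateway: iSCSI target on the IP side, FC initiator on the FC side."""
    ip_address: str
    storage: FcStorageSystem

    def receive_ip_packet(self, packet: dict) -> dict:
        if packet["dst_ip"] != self.ip_address:
            raise ValueError("packet is not addressed to this gateway")
        # IP -> FC: keep the SCSI payload, swap the transport around it.
        fc_reply = self.storage.receive_fc_frame(
            {"transport": "FC", "scsi": packet["scsi"]})
        # FC -> IP: the same translation in the other direction.
        return {"transport": "IP", "src_ip": self.ip_address,
                "scsi": fc_reply["scsi"]}


storage = FcStorageSystem(luns={0: b"block data"})
gateway = Gateway(ip_address="192.0.2.10", storage=storage)

# The iSCSI initiator targets the gateway's IP address, not the array itself.
reply = gateway.receive_ip_packet(
    {"transport": "IP", "dst_ip": "192.0.2.10",
     "scsi": {"op": "READ", "lun": 0}})
print(reply["scsi"]["status"])  # GOOD
```

In the native case the gateway step disappears: the initiator's target address is the iSCSI port of the storage system itself.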
